Intel, SambaNova Planning Multi-Year Collaboration for Xeon-Based AI Inference

As AI workloads become more diverse and complex, organizations increasingly need different solutions for different needs. This is driving demand for more heterogeneous infrastructure, built on diverse compute, memory and networking along with a consistent software foundation, to support inference at scale across the data center.

Today, SambaNova and Intel announced a planned multi-year strategic collaboration to deliver high-performance, cost-efficient AI inference solutions built around Intel® Xeon®-based infrastructure for AI-native companies, model providers, enterprises and government organizations worldwide. Intel Capital is also participating in SambaNova’s Series E financing round.

For customers with AI workloads well-suited to SambaNova’s approach, the combination of Intel CPUs and SambaNova’s AI platform can provide a compelling rack-level inference option as Intel’s GPU-based solutions come online.

This collaboration complements Intel’s existing data center GPU commitments and does not alter its path to competing in AI. The company continues to invest across GPU IP, architecture, products, software and systems, and to strengthen its roadmap as part of its edge-to-cloud AI engagements.

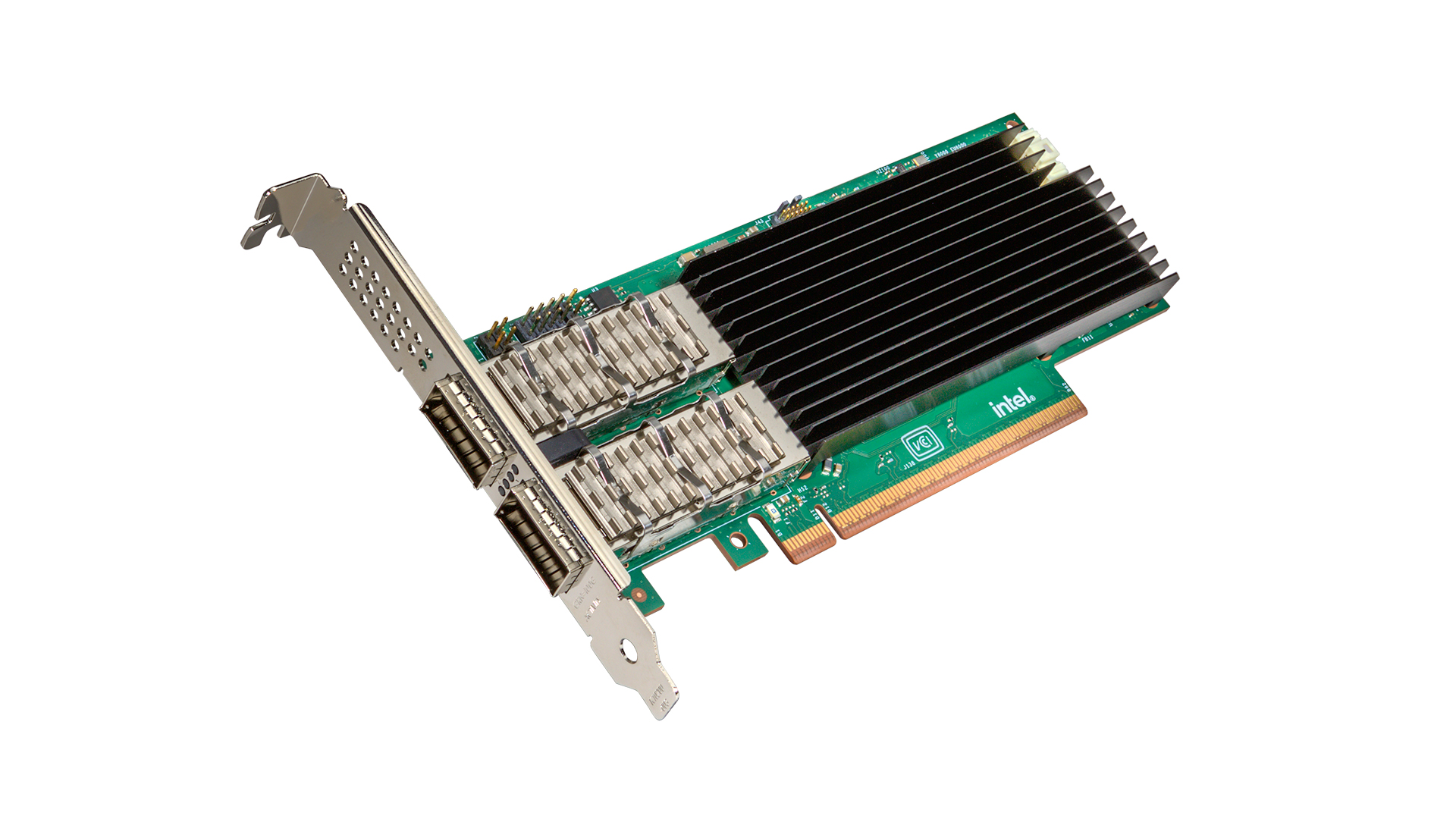

Together, Intel and SambaNova aim to help shape the next generation of heterogeneous AI data centers, integrating Intel Xeon processors, Intel GPUs, Intel networking and storage, and SambaNova systems to unlock a multi-billion-dollar inference market opportunity.
