Rockets to Retail: Intel Core Ultra Delivers Edge AI for Video Management

At Intel Vision, Network Optix debuts natural language prompt prototype to redefine video management, offering industries faster AI-driven insights and efficiency.

On the surface, aerospace manufacturers, shopping malls, universities, police departments and automakers might not have a lot in common. But each of them uses and manages hundreds to thousands of video cameras across its properties.

Another thing they have in common is Network Optix. The global software development company, headquartered in Walnut Creek, California, specializes in platforms that let organizations across industries manage, record and analyze huge amounts of video data. Working with Intel, Network Optix has optimized its software for the Intel® Core™ Ultra 200H series of processors. With robust central processing units (CPUs) plus integrated neural processing units (NPUs) and graphics processing units (GPUs), these processors, code-named Arrow Lake H, are designed to handle this kind of complex video AI at the edge.


Internet Protocol (IP) cameras transmit and receive data over a computer network. They’re the typical surveillance cameras you might see at government buildings, around shopping centers or on college campuses. They feed into a central database that a security guard or operations center can monitor.

Artificial intelligence (AI) is changing how companies and organizations think about and use video data, in ways that go well beyond security. With AI, cameras along manufacturing assembly lines can count products and tell businesses how many are produced per day and when they are packaged. AI models can alert managers to where production might be slowing, or when and how defects occur. And AI computer vision geared toward health and safety can trigger alerts when employees are not wearing proper protective gear.

Processing at the Edge Makes the Difference

Network Optix software can process all this video data in the cloud, but the biggest gains, including speed, come from running it on an on-premises server that takes advantage of the power efficiency and overall performance of Intel Core Ultra 200H processors, which were designed for edge AI applications. Unlike large data centers with dedicated AI infrastructure, edge AI deployments must integrate seamlessly into existing IT systems in space-constrained, low-power and cost-sensitive environments. The same hardware handles not only the AI processing but also the general compute, video and graphics workloads.

“You can search a whole year of data in several seconds,” says James Cox, vice president of Business Development at Network Optix. “It was a customer pain point that it took a long time to load up an archive and look through it. The old systems were slow to aggregate data around the world for a global company.”

Network Optix’s technology is designed to scale to large numbers of cameras and vast amounts of video data. Its customers currently run a combined 4.5 million cameras on the platform worldwide.

“If you tried to run AI in the cloud or somewhere else that wasn't your local premises, you’d be streaming a lot of data to the cloud. It’s about 5 megabytes per camera per second. If you have a hundred cameras, you're now pushing 500 megs constantly up to the cloud, and a lot of sites have thousands of cameras, so it’s just not doable,” Cox says. “By running it at the edge, you turn it into just pure data, and you can then look at all of that and get alerts on it.”
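Cox’s numbers are easy to check. This short Python sketch takes his rough figure of 5 megabytes per camera per second as its only input (real bitrates vary with resolution, frame rate and codec) and assumes decimal units (1 MB = 10^6 bytes). The function name `uplink_estimate` is purely illustrative.

```python
# Back-of-the-envelope uplink estimate using the figure quoted in the article:
# roughly 5 megabytes (MB) of video per camera per second.
MB_PER_CAMERA_PER_SECOND = 5
SECONDS_PER_DAY = 24 * 60 * 60


def uplink_estimate(cameras: int, mb_per_camera_s: float = MB_PER_CAMERA_PER_SECOND) -> dict:
    """Return the sustained upload rate and daily volume for one site."""
    mb_per_s = cameras * mb_per_camera_s
    return {
        "MB/s": mb_per_s,
        "Gbit/s": mb_per_s * 8 / 1000,  # 8 bits per byte, decimal units
        "TB/day": mb_per_s * SECONDS_PER_DAY / 1_000_000,
    }


for n in (100, 1000):
    print(n, "cameras:", uplink_estimate(n))
```

For 100 cameras this gives 500 MB/s, which is 4 Gbit/s of sustained upload and 43.2 TB per day; a 1,000-camera site would need ten times that.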

Those aerospace manufacturers? They’re some of Network Optix’s biggest customers. Think about a rocket launch … and the number of cameras required to provide an uninterrupted live feed of all areas of the rocket to engineers and the control room. The real-time information they capture is crucial to a successful launch.

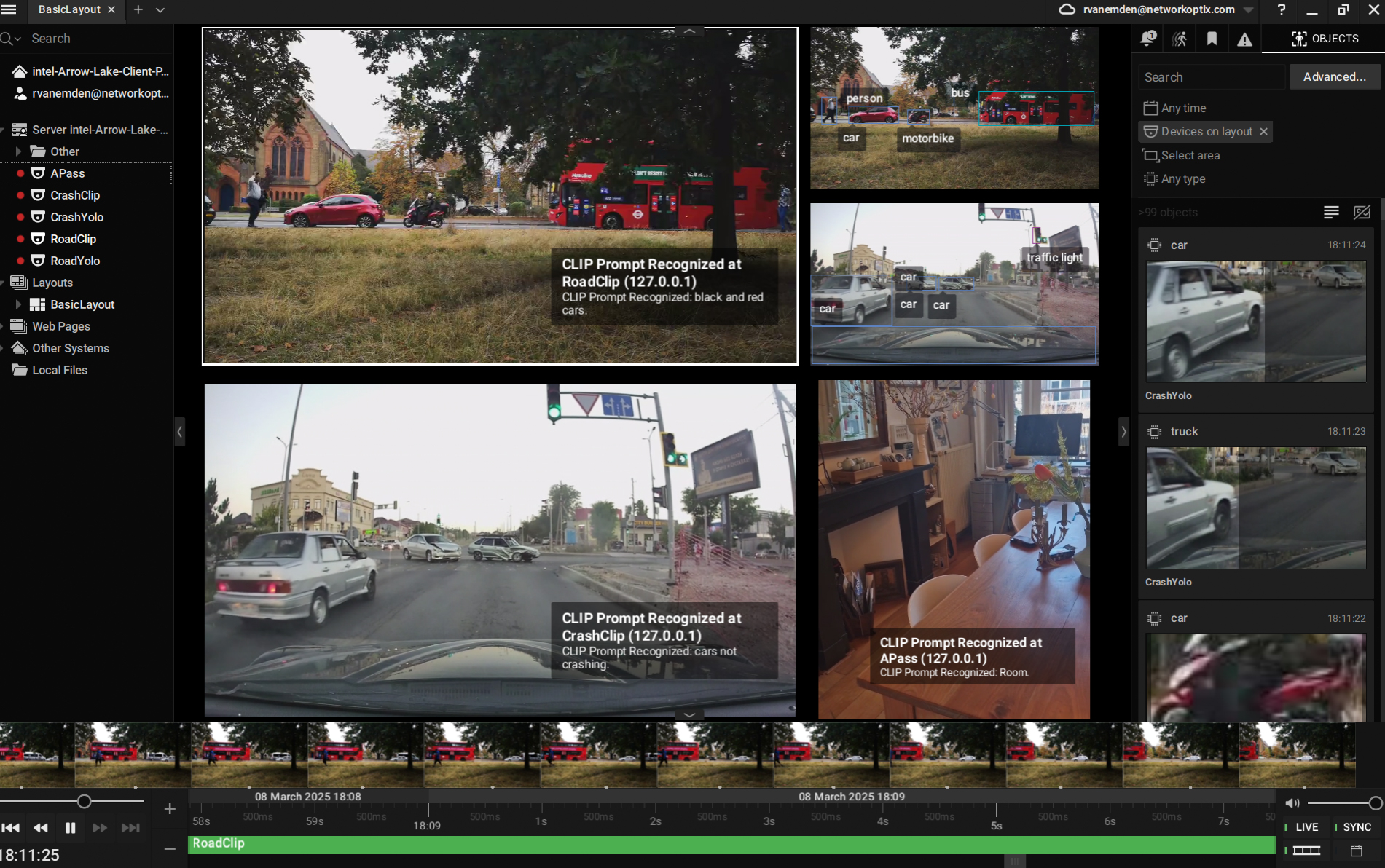

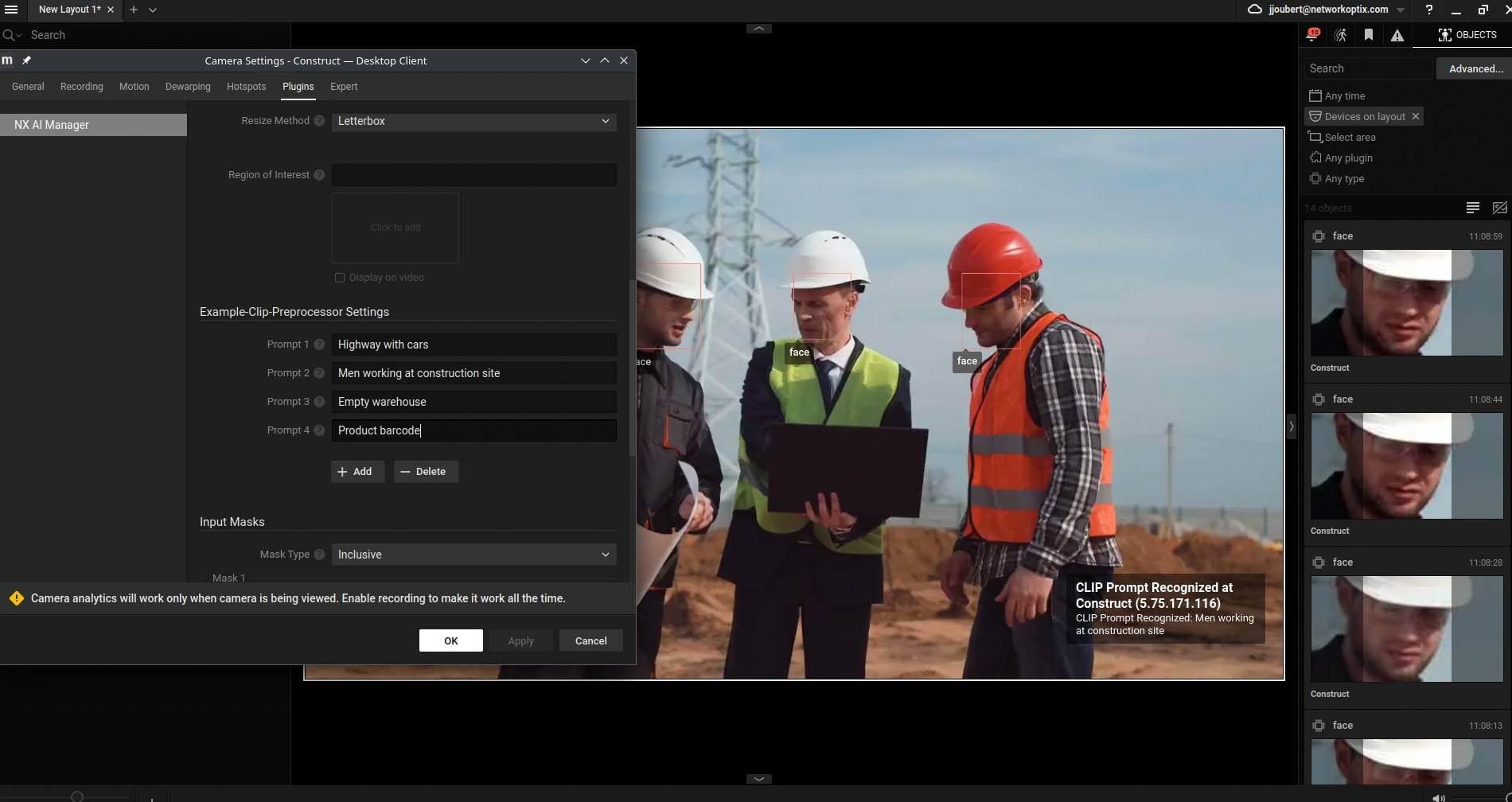

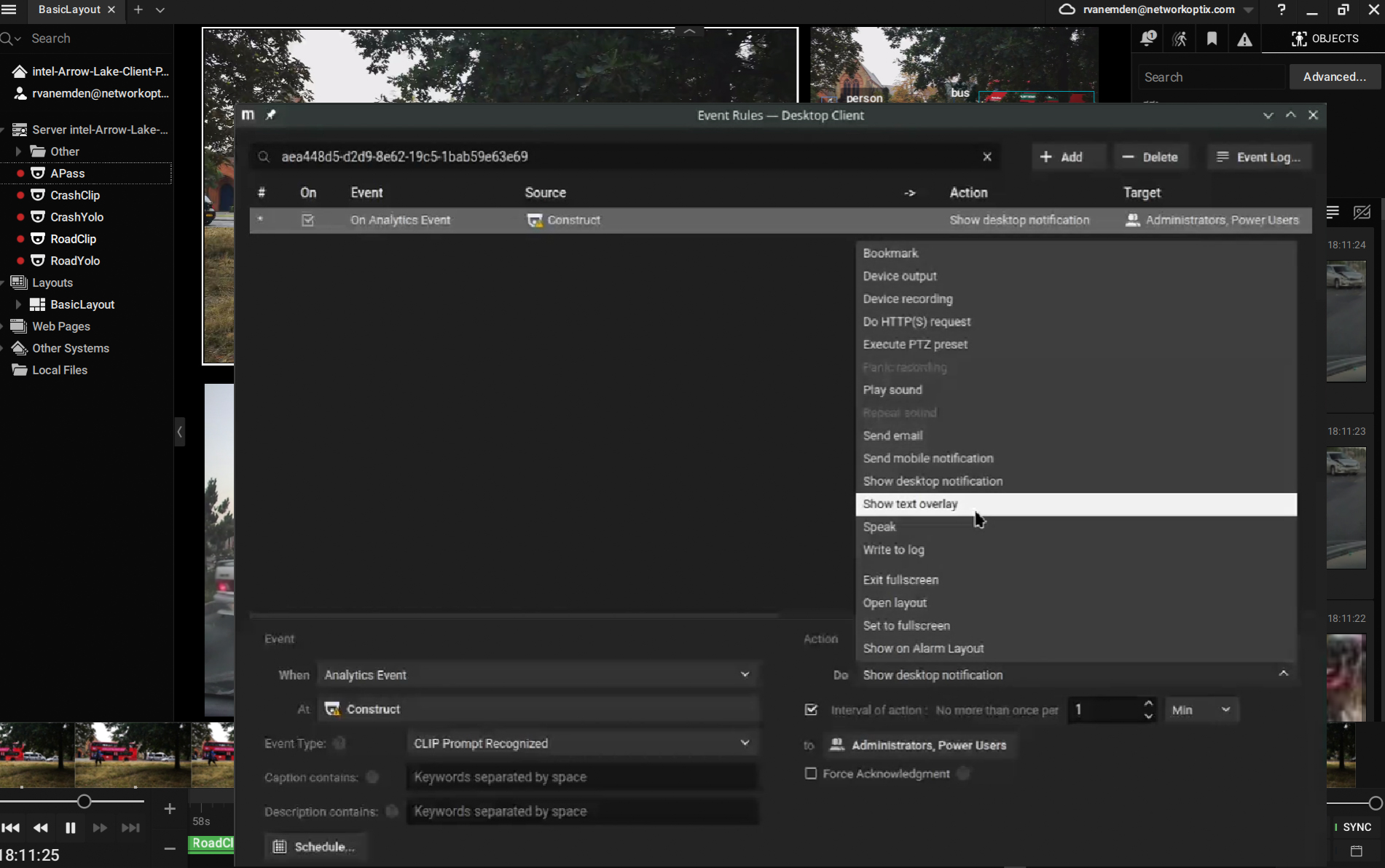

Customers such as traffic engineers at large municipalities and university campuses use drop-down menus within the platform to set filters that search for, or trigger alerts on, specific events such as traffic crashes or people fighting. Within seconds, the AI scans all video feeds, live and recorded, for those parameters and pulls up only the relevant clips. It can scan across multiple properties worldwide, and it requires no technical training: anyone from a receptionist to a security guard or administrator can use the system.
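Cox’s point about turning video into “pure data” suggests why such searches can be fast: once edge inference has reduced footage to event records, a drop-down filter becomes a query over metadata rather than a scan of raw video. The following is a minimal, hypothetical sketch of that idea; `EventRecord`, its fields and `search()` are illustrative names, not Network Optix’s API, and a production system would keep such records in an indexed database rather than a Python list.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class EventRecord:
    """Hypothetical metadata emitted by edge AI for one detected event."""
    site: str
    camera_id: str
    timestamp: datetime
    label: str     # e.g. "traffic_crash", "fight"
    clip_uri: str  # pointer to the recorded clip, not the video itself


def search(records, labels, sites=None, start=None, end=None):
    """Return records matching the selected labels and optional sites/time window, newest first."""
    hits = [
        r for r in records
        if r.label in labels
        and (sites is None or r.site in sites)
        and (start is None or r.timestamp >= start)
        and (end is None or r.timestamp <= end)
    ]
    return sorted(hits, key=lambda r: r.timestamp, reverse=True)


records = [
    EventRecord("campus-east", "cam-12", datetime(2025, 3, 1, 14, 5), "fight", "clips/0001.mp4"),
    EventRecord("downtown", "cam-3", datetime(2025, 3, 2, 8, 30), "traffic_crash", "clips/0002.mp4"),
    EventRecord("downtown", "cam-7", datetime(2025, 3, 2, 9, 10), "person", "clips/0003.mp4"),
]

# Equivalent of choosing "Traffic crash" from a drop-down and limiting it to one site.
for r in search(records, labels={"traffic_crash"}, sites={"downtown"}):
    print(r.camera_id, r.timestamp, r.clip_uri)
```

Selecting several labels, or leaving `sites` unset, widens the same query across every property.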

Network Optix platforms integrate OpenAI’s CLIP (Contrastive Language-Image Pre-training) technology, a neural network trained to connect images with matching text descriptions. It can detect objects, colors, human behavior and people, with an important distinction: Network Optix does not perform facial recognition. The models can detect that a human face is present, but only as object classification, for example to distinguish a person from a car or an animal.
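CLIP’s core idea can be sketched in a few lines: an image encoder and a text encoder map pictures and captions into the same vector space, and an image is classified by whichever text label its embedding sits closest to, measured by cosine similarity. In this illustrative Python version the 3-dimensional embeddings are made-up stand-ins for encoder outputs, and the temperature of 0.01 is an assumed value (CLIP learns its own scaling during training); it is not Network Optix’s implementation. Note that it only answers “what kind of object is this,” matching the object-classification use described in the article, not who a person is.

```python
import math


def cosine(a, b):
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def zero_shot_classify(image_emb, label_embs, temperature=0.01):
    """Pick the text label whose embedding is most similar to the image embedding.

    Returns (best_label, probs), where probs is a softmax over the cosine
    similarities divided by `temperature` (an assumed value here).
    """
    logits = {label: cosine(image_emb, emb) / temperature for label, emb in label_embs.items()}
    peak = max(logits.values())  # subtract the max before exp() for numerical stability
    exps = {label: math.exp(v - peak) for label, v in logits.items()}
    total = sum(exps.values())
    probs = {label: e / total for label, e in exps.items()}
    return max(probs, key=probs.get), probs


# Made-up 3-dimensional embeddings; real CLIP encoders output much longer vectors.
labels = {
    "a photo of a person": [1.0, 0.0, 0.0],
    "a photo of a car": [0.0, 1.0, 0.0],
    "a photo of an animal": [0.0, 0.0, 1.0],
}
best, probs = zero_shot_classify([0.9, 0.2, 0.1], labels)
print(best)  # a photo of a person
```

Because the labels are plain text, swapping in a different set of categories changes what the classifier can recognize without touching the encoders.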

“If customers need to remotely look into their sites, they only need to look at the cameras the AI has chosen,” Cox says. “Not to mention privacy; a lot of customers don’t particularly want their video running to the cloud.”

Many existing security systems offer little customization, relying on fixed parameters such as car color or license plate. This leads to missed events, false alarms and hours wasted reviewing footage. Network Optix’s multimodal AI solution, powered by Intel Core Ultra 200H series processors, addresses this with flexible, natural language queries that enable smarter security monitoring.

Natural Language Prompt Prototype Debuts at Intel Vision

While Network Optix’s software can run on any chip architecture, Intel’s new Core Ultra 9 processor 285H delivers 1.35x higher throughput and lower latency¹ when running the company’s AI models, compared with Intel’s previous-generation Core Ultra 7 processor 165H (code-named Meteor Lake).

At the 2025 Intel Vision event, starting March 31 in Las Vegas, Network Optix is making its user interface even simpler. Its engineers built a prototype demo of a new natural language text prompt model running on Core Ultra 200H processors. The cutting-edge, disruptive technology goes beyond clicking drop-down filters to search video or set alerts: the prototype uses a chatbot that understands natural language text prompts.

The demo runs through several scenarios. One shows how traffic infrastructure managers can set prompts and real-time notifications to detect car crashes across hundreds or thousands of cameras. Traditional systems lack the flexibility to detect crashes without predefined rules. In a video of a busy intersection, the AI dynamically detects the moment a car crash occurs. The system automatically retrieves the relevant footage and alerts the user, reducing manual review time and improving safety response in high-risk areas.

Another scenario involves a passport left behind in a busy area. Given the simple text prompt “Find passport,” the AI-powered object recognition can search for the item without a large, labeled dataset dedicated to every possible object of interest. The AI scans the video feeds, finds the passport on a table and alerts the user once it’s spotted. It’s an example of Network Optix’s scalability: as new items or categories of interest appear, the detection system can adapt quickly without extensive re-engineering, reducing retraining costs.
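The passport scenario relies on the open-vocabulary property of CLIP-style models: a new object of interest is just a new text embedding, so supporting it does not require collecting a labeled dataset or retraining. The hypothetical `PromptAlerter` class here sketches that alerting pattern, assuming frame and prompt embeddings already come from a shared image-text model; the toy vectors, camera names and the 0.8 threshold are all illustrative. Real image-text similarity scores tend to fall in a narrow range, so any threshold would need to be calibrated for the model in use.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


class PromptAlerter:
    """Hypothetical open-vocabulary alerter: each prompt is a stored text embedding."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # illustrative; must be calibrated per model
        self.prompts = {}           # prompt text -> text embedding

    def add_prompt(self, text, text_emb):
        # A new object of interest is one more embedding: no dataset, no retraining.
        self.prompts[text] = text_emb

    def check_frame(self, camera_id, frame_emb):
        """Return (camera_id, prompt, score) for every prompt this frame matches."""
        alerts = []
        for text, emb in self.prompts.items():
            score = cosine(frame_emb, emb)
            if score >= self.threshold:
                alerts.append((camera_id, text, round(score, 3)))
        return alerts


# Toy embeddings standing in for image/text encoder outputs.
alerter = PromptAlerter(threshold=0.8)
alerter.add_prompt("Find passport", [0.1, 0.9, 0.2])
frames = {"intersection-cam": [0.7, 0.1, 0.7], "cafe-cam": [0.15, 0.95, 0.1]}


def scan(frames):
    for cam, emb in frames.items():
        for alert in alerter.check_frame(cam, emb):
            print("ALERT", alert)


scan(frames)  # only cafe-cam matches "Find passport"
alerter.add_prompt("Detect car crash", [0.7, 0.0, 0.7])  # added later, no retraining
scan(frames)  # now intersection-cam also matches the new prompt
```

Because `add_prompt` only stores a vector, the second prompt starts matching frames immediately, which is the “adapt without retraining” behavior the scenario describes.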

Cox says the prototype will roll out to Network Optix developers for testing later this year.

1 Intel does not control or audit third-party data. You should consult other sources to evaluate accuracy.